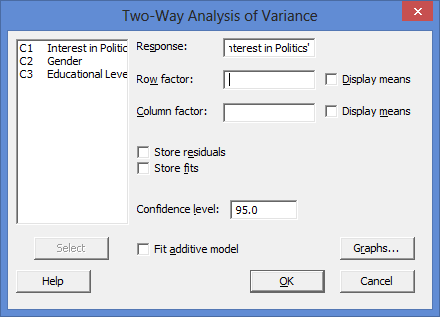

Just by looking at these plots we can say that the linear regression model in “example 2” fits the data better than that of “example 1”. This is because in “example 2” the points are closer to the regression line. Therefore, using a linear regression model to approximate the true values of these points will yield smaller errors than in “example 1”.

In the plots above, the gray vertical lines represent the error terms: the difference between the model and the true value of Y. Mathematically, the error of the i-th point on the x-axis is given by (Yᵢ – Ŷᵢ), which is the difference between the true value of Y (Yᵢ) and the value predicted by the linear model (Ŷᵢ). This difference determines the length of the gray vertical lines in the plots above.

# Residual standard deviation vs residual standard error vs RMSE

Now that we have developed a basic intuition, we will try to come up with a statistic that quantifies this goodness of fit. The simplest way to quantify how far the data points are from the regression line is to calculate the average distance from this line:

$$\text{Residual standard deviation} = \sqrt{\frac{\sum_i (Y_i - \hat{Y}_i)^2}{df}}$$

The degrees of freedom df is equal to the sample size minus the number of parameters we’re trying to estimate.

For example, if we’re estimating 2 parameters β₀ and β₁ as in:

$$Y = \beta_0 + \beta_1 X + \varepsilon$$

then df = n − 2, where n is the sample size.

# How to interpret the residual standard deviation/error

Now that we have a statistic that measures the goodness of fit of a linear model, we will discuss how to interpret it in practice.

Simply put, the residual standard deviation is the average amount that the real values of Y differ from the predictions provided by the regression line. We can divide this quantity by the mean of Y to obtain the average deviation in percent (which is useful because it is independent of the units of measure of Y).

Suppose we regressed systolic blood pressure (SBP) onto body mass index (BMI), which is a fancy way of saying that we ran the following linear regression model:

$$\text{SBP} = \beta_0 + \beta_1 \times \text{BMI} + \varepsilon$$

And the residual standard error is 12 mmHg. So we can say that the BMI accurately predicts systolic blood pressure with about 12 mmHg error on average. More precisely, we can say that 68% of the predicted SBP values will be within ± 12 mmHg of the real values.

Remember that in linear regression, the error terms are Normally distributed.

And one of the properties of the Normal distribution is that 68% of the data sits within 1 standard deviation of the average (see figure below).

Therefore, 68% of the errors will be within ± 1 × residual standard deviation.

For example, our linear regression equation predicts that a person with a BMI of 20 will have an SBP of 120 mmHg. With a residual error of 12 mmHg, this person has a 68% chance of having a true SBP between 108 and 132 mmHg.

Moreover, if the mean of SBP in our sample is 130 mmHg for example, then 12 / 130 ≈ 9.2%. So we can also say that the BMI accurately predicts systolic blood pressure with a percentage error of 9.2%.

The question remains: Is 9.2% a good percent error value? More generally, what is a good value for the residual standard deviation? The answer is that there is no universally acceptable threshold for the residual standard deviation. This should be decided based on your experience in the domain. In general, the smaller the residual standard deviation/error, the better the model fits the data. And if the value is deemed unacceptably large, consider using a model other than linear regression.

The total degrees of freedom (DF) are the amount of information in your data. The analysis uses that information to estimate the values of unknown population parameters. The total DF is determined by the number of observations in your sample. The DF for a term shows how much information that term uses. Increasing your sample size provides more information about the population, which increases the total DF. Increasing the number of terms in your model uses more information, which decreases the DF available to estimate the variability of the parameter estimates.

If two conditions are met, then Minitab partitions the DF for error. The first condition is that there must be terms you can fit with the data that are not included in the current model. For example, if you have a continuous predictor with 3 or more distinct values, you can estimate a quadratic term for that predictor. If the model does not include the quadratic term, then a term that the data can fit is not included in the model and this condition is met. The second condition is that the data contain replicates.
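To make the residual standard error and its degrees of freedom concrete, here is a minimal Python sketch that fits a straight line by ordinary least squares and computes the statistic by hand. The function names (`fit_line`, `residual_standard_error`, `percent_error`) and the BMI/SBP numbers are made up for illustration; they are not taken from the article or from Minitab.

```python
import math


def fit_line(x, y):
    """Ordinary least squares estimates (b0, b1) for y = b0 + b1 * x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    b1 = sxy / sxx
    b0 = mean_y - b1 * mean_x
    return b0, b1


def residual_standard_error(x, y):
    """Return (residual standard error, degrees of freedom) of the fitted line."""
    b0, b1 = fit_line(x, y)
    # Sum of squared residuals: (Y_i - Yhat_i)^2 over all points
    sse = sum((yi - (b0 + b1 * xi)) ** 2 for xi, yi in zip(x, y))
    df = len(x) - 2  # sample size minus the 2 estimated parameters (b0, b1)
    return math.sqrt(sse / df), df


def percent_error(rse, mean_y):
    """Residual standard error expressed as a percentage of the mean of Y."""
    return 100 * rse / mean_y


# Hypothetical sample, invented for this example only
bmi = [18, 20, 22, 25, 27, 30, 33, 35]
sbp = [112, 118, 121, 130, 128, 139, 141, 150]

b0, b1 = fit_line(bmi, sbp)
rse, df = residual_standard_error(bmi, sbp)
prediction = b0 + b1 * 20  # predicted SBP for a BMI of 20

print(f"residual standard error: {rse:.2f} mmHg on {df} degrees of freedom")
print(f"percent error: {percent_error(rse, sum(sbp) / len(sbp)):.1f}%")
# Roughly 68% of true values are expected within +/- 1 residual standard error
print(f"approximate 68% range at BMI 20: {prediction - rse:.1f} to {prediction + rse:.1f} mmHg")
```

Calling `percent_error(12, 130)` reproduces the article's figure of about 9.2%. The sketch divides by the degrees of freedom rather than the sample size, which is what separates the residual standard error from the RMSE.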